What are systems?

A system is “a set of things—people, cells, molecules, or whatever – interconnected in such a way that they produce their own pattern of behaviour over time.”[1]. These ‘things’ are called elements, and may be people, rocks, soil, trees, air or whatever, and their associated behaviour. The elements are interconnected – i.e. they have relationships, and all elements are mutually influencing – each element affects other elements and vice versa. Thus, a farmer has a relationship with the soil, the behaviour of which s/he influences by applying fertilisers or tilling it. It is often said that a system is more than the sum of its parts, because of the interconnections between elements. A system is never the sum of its parts; it is the product of their interaction.

[D]ividing [a] cow in half does not give you two smaller cows. You may end up with a lot of hamburger, but the essential nature of ‘cow’ — a living system capable, among other things, of turning grass into milk — then would be lost. This is what we mean when we say a system functions as a ‘whole’. Its behavior depends on its entire structure and not just on adding up the behavior of its different pieces.

Draper Kauffman Jr.

Through the interactions between system elements, patterns emerge – not at the level of the individual element, but collectively. For example, groups of people in one part of the system may farm in a similar way – because they learn from each other, or because social pressures encourage them to do so.

Patterns may be defined as observed regularities within the system. They are a key focus of our approach. Depending on the size of the system we are looking at, different ‘clusters’ of patterns occur at different places in the system. These clusters affect and influence each other, and in combination, add up to the overall behaviour of the system.

If a system is disturbed by an external pressure (e.g. a drought or an intervention) then its behaviour changes and a new set of patterns emerges – which, in turn, will influence system behaviour. For our purposes, such emergence is very important. If we intervene in a landscape, we ideally want a new set of behaviours to emerge.

Landscapes as complex systems

Landscape systems are complex systems. These are heterogeneous collections of people, institutions, ecosystems, technologies, and meanings whose relations generate emergent patterns. Think of a complex system as all the different ingredients and interactions that make a landscape what it is: farmers and their practices, soil and its organisms, markets and their price signals, traditions and their meanings, rainfall patterns and their variability, technologies and their uses, policies and their enforcement (or lack thereof). None of these elements alone defines the landscape; it is their relationships and interactions that create the patterns we observe.

A complex system is constantly being assembled and disassembled through the practices of actors within it. It is not a fixed structure but an ongoing process – patterns stabilise for a time, then shift as conditions change or new elements enter. When we speak of ‘system boundaries,’ we are really describing where this system’s strongest relationships peter out and other relationships become more influential. These boundaries are fuzzy rather than sharp – a village system overlaps with a watershed system, which overlaps with a regional market system, and so on.

A farmer’s decision is shaped by soil moisture (immediate), market prices (regional to global), cultural norms (historical-local), and climate patterns (planetary). None of these is ‘above’ or ‘below’ the others in some hierarchy. They’re all co-present, all exerting influence through feedback loops that operate at different spatial and temporal ranges. This is what makes landscape systems complex: countless heterogeneous elements whose relationships ripple in all directions.

Systems have purpose. “The purpose of a system is what it does”, Stafford Beer has famously written.[2] System purpose does not emerge from what actors say it is, or from what their ambitions within the system are. Purpose arises out of millions of micro-interactions between elements in the system. We can only deduce system purpose from its behaviour. Discovering a system’s ‘real’ purpose is important if we are going to influence it.

Complex systems also generate what the strategic foresight literature calls weak signals: early indicators of emerging patterns that are typically drowned out by dominant information flows. A new practice appearing at the margins of a landscape, a subtle shift in how people talk about their resources, a small behavioural change that has not yet compounded into a visible trend – these are weak signals. Complex systems can change both gradually and abruptly, and the gradual change can be deceptive — it may represent slow drift towards a threshold (a ‘tipping point’ which we describe below) beyond which change becomes sudden and difficult to reverse. Weak signals are often the earliest evidence that such a transition is approaching. The standard monitoring and evaluation apparatus is precisely calibrated to miss them, because it measures what the logframe says should be measured, not what is actually emerging. Detecting weak signals requires an information architecture oriented towards surprise rather than confirmation – a point we return to in our discussion of adaptivity and opportunity points.

All complex systems share several characteristics that are important to bear in mind:

- They comprise millions of interacting elements.

- These interactions regulate the system – each interaction modifies the system.

- At system level, they display behaviour – which is their purpose.

- Small interventions may yield big results because of the ‘rippling effect’.

- They are non-linear.

- There is a high risk of unanticipated results.

- They display high uncertainty and low predictability.

- They can only be understood in retrospect. Complexity prevents prediction.

- Rigid protocols don’t really work.

- Success once does not mean success again.

Understanding system conditions

All landscapes are complex systems – they exhibit self-organising behaviour where patterns emerge from the interactions of many actors without central control. But not all complex systems behave the same way. To understand how landscapes pattern themselves and how we might influence those patterns, we need to examine the conditions that shape system behaviour.

Glenda Eoyang offers a practical approach to this challenge. She argues that there are three fundamental conditions that determine the speed, path, and outcomes of how complex systems self-organise: Containers, Differences and Exchanges (CDE).[3] This CDE framework provides a sufficient basis for analysing and diagnosing landscape systems. Eoyang characterises each condition by describing its extremes (as you will see). In reality, most conditions sit somewhere in between.

Containers (C): what bounds the system?

The container defines who or what is included in a complex system and constrains the probability of contact among actors. Containers have edges rather than rigid boundaries – think of them as zones where relationships strengthen or weaken rather than hard lines. Eoyang distinguishes between ‘tight’ and ‘loose’ containers.

Tight containers have relatively clear edges and well-defined membership. There is a high probability that actors within the system will interact – in part because system actors have shared identity or commitment. Examples could be a cohesive community organisation, a formal multi-stakeholder platform, or a members’ club.

Loose containers have fuzzy or ambiguous edges. There is a low probability that actors will interact, and shared identity is weak. Examples include an administrative district that does not match ecological boundaries, informal networks, or actors who happen to share a landscape but don’t see themselves as connected. The assemblage exists but barely coheres – relationships are sparse and weak.

Difference (D): what significant distinctions exist?

Difference establishes the potential for change within the system. Significant differences are those that matter to actors and create tensions or opportunities for new patterns to emerge.

In a complex system with high difference, there are many significant distinctions between actors in terms of power, resources, interests, knowledge, values, or goals. The potential for change is high, and these differences can drive either innovation or conflict. Examples might be large power asymmetries between elites and communities, conflicting land-use visions, competing resource claims, or vast differences in education and access to information.

With low difference, there are few significant distinctions. There is high conformity and homogeneity. This brings stability but limited capacity for adaptation: when one element fails, all may fail similarly. Examples include societies of a single culture, farming communities with similar practices, or groups with aligned political interests.

Exchange (E): how do actors connect?

Exchange refers to the connections that transfer information, energy, or resources across differences within a container. This is how relationships actually operate – through flows of communication, goods, coercion, knowledge, or influence.

Where there are tight exchanges, changes are fast and predictable. Dense connections exist between actors who have frequent interaction and feedback. Examples include daily market interactions, close social networks, or dense communication channels. Changes ripple quickly through the system – when one actor shifts practice, others notice and respond rapidly.

In contrast, where there are loose exchanges, changes are slow and unpredictable. Connections are weak and infrequent, and information flow is limited. Examples include occasional meetings, weak ties between distant actors, or poor communication infrastructure. Changes remain localised – innovations in one part of the system do not spread because the connections are not there to carry them.

How system conditions create landscape patterns

The CDE conditions interact to shape landscape system behaviour. When exchanges are tight (actors frequently connected), information, resources, and influence flow rapidly. Changes cascade quickly through the system, and predictable patterns emerge. In Norway, for example, communities are highly connected and social pressure creates conformity. Dense feedback loops enforce social norms without central control, creating high predictability through emergent self-organisation.

When exchanges are loose (actors weakly connected), information and influence flow slowly. Changes tend to be localised and do not ripple. Pattern formation is unpredictable. Farmers making independent decisions with minimal interaction create unpredictable system-wide outcomes – each doing their own thing without the connections that would align behaviours.

If differences are suppressed (homogeneity is enforced), there is limited capacity for adaptation. Patterns may be stable, but ‘brittle’. Such complex systems are vulnerable to shocks – when one element fails, all fail similarly. Monoculture agriculture, or hierarchical coordination that eliminates diversity, are examples of suppressed difference and highly brittle systems.

When difference is engaged productively (diversity is welcomed or encouraged), adaptive capacities are high. Resilience occurs due to ‘redundancy’ – the system becomes more robust and able to withstand shocks when it has multiple, overlapping ways of performing essential functions rather than relying on a single element or pathway. Innovation emerges because of difference. Diversified farming systems with multiple livelihood strategies are far more capable of absorbing shocks.

Diagnosing landscape conditions

For each set of CDE conditions, we can assess the condition of a landscape system by posing the following questions:

Containers

- What bounds this landscape system?

- Who is included or excluded?

- How strong are its edges?

- Are edges imposed externally or self-maintained?

Differences

- What significant distinctions exist between actors?

- Power? Resources? Knowledge? Values? Goals?

- Are differences engaged productively, suppressed, or driving conflict?

- Is homogeneity being enforced?

Exchanges

- How frequently do actors interact?

- What flows between them? (Information? Resources? Coercion?)

- Are exchanges tight (dense, frequent) or loose (sparse, occasional)?

- Are exchanges unidirectional (from powerful to weak) or mutual?

The patterns we observe emerge from how these conditions interact. Understanding CDE helps us identify ‘opportunity points’ for intervention.

System states, heading and tipping points

To understand how complex systems behave over time, we need to distinguish between three related but distinct concepts:

Patterns are the observed regularities in how system elements interact and behave. As we discussed earlier, patterns emerge from the interactions of system elements – like farmers in one area adopting similar practices because they learn from each other. Patterns can shift and change without the system fundamentally reorganising. Patterns are fundamental to system behaviour.

System ‘state’ (what geographers might call a configuration or ordering) refers to when a system’s dynamics are similar across time – a relatively stable configuration of patterns. A system maintains the same state even as individual patterns fluctuate, as long as the overall configuration remains similar. For example, a landscape might remain dominated by monoculture, even if there are small ‘islands’ of diversified organic farming.

When a system moves from one state to another, it is said to ‘tip’. A good example of a (very sudden) tip is when an ice cube turns to water. You can raise the temperature by a degree, and nothing happens; then another degree, and again, nothing happens. But then, suddenly, we observe a puddle forming underneath it, and after that, the ice cube melts pretty quickly. There are things going on in the ice cube that we don’t observe – in fact, systems may have tipped before we even know about it.4 Tipping represents a fundamental reorganisation – a qualitative change in how the system operates, not just a shift in direction.

Systems don’t get solved. At best, we hope to shift systems to a healthier state.

Rob Ricigliano

Systems don’t just need things fixed. They need healing — healing of relationships, historic inequities, destructive patterns, and the environment.

Systems are infinite. There is no finish line that can be crossed in days or even a few years. Maintaining healthy systems is an ongoing task.

System heading (what geographers might call trajectory or the direction of becoming) refers to the direction in which the system is moving, much as a ship or an aeroplane has a heading relative to its current position. We judge this heading from the system’s history and its present condition, and it is plausible that the system will continue on that heading unless some pressure moves it. A system can change its heading – shifting direction gradually – whilst remaining in the same state. Heading is determined by which patterns are strengthening or weakening, which feedback loops are dominant, and how power is flowing through the system. Shifting a system’s heading inevitably redistributes power, costs and benefits among agents – these are trade-offs.

When we intervene in a landscape system, we are attempting to change its heading – to move its course so it inclines toward more sustainable, just, or resilient configurations. We do this by influencing patterns: strengthening some (by amplifying feedback), weakening others (by dampening feedback), and introducing new patterns through our interventions. Over time, sustained heading changes can move a system toward thresholds where state changes become possible.

It is important to note that we cannot know where a system will end up. This is a key characteristic of complex systems. We can detect its heading, however, as the system stands right now, in this moment. In any case, there is no ‘ending’ to the system.

Resilience and diversity

Resilience is a much-used systems concept. This refers to a system’s ability to withstand shocks and stresses. It might get knocked off course for a bit, but given time, it returns to its former state.

Resilience, however, depends critically on diversity. Systems in which all of the elements are more or less the same can display properties that suggest they are impervious to contextual changes. The relationships between the elements all continue humming along in much the same way. Until they don’t. Because of homogeneity, when one element collapses, they all collapse in much the same way. The 2008 financial crisis, for example, revealed high levels of homogeneity amongst the banks, all tightly interconnected, and the collapse was sudden and severe.

Homogeneity is not good for system resilience. Diversity, on the other hand, is good for it, because internal risks are contained. If one element and its surrounding connections fail, then because neighbouring elements and connections are dissimilar, the failure ends there and doesn’t ripple.[4]

Diversity is not merely beneficial for resilience; it is a functional requirement of working in complex systems. A system’s regulatory capacity must match the variety of challenges it faces. A landscape system that lacks diversity – of people, perspectives, knowledge systems, and cognitive styles – lacks the variety needed to generate novel responses to novel challenges. This includes neurodiversity: the non-linear pattern recognition and lateral thinking that institutions tend to filter out are precisely the cognitive capacities that complex situations most demand. Stimulating a system with diversity – introducing perspectives that do not fit the prevailing frame – is itself a form of intervention, because it increases the system’s capacity to adapt.

System interconnections

We have stressed that complex systems are characterised by their interconnections – the relationships between elements. These interconnections operate through feedback loops because they are circular: information about outcomes feeds back to influence future actions.

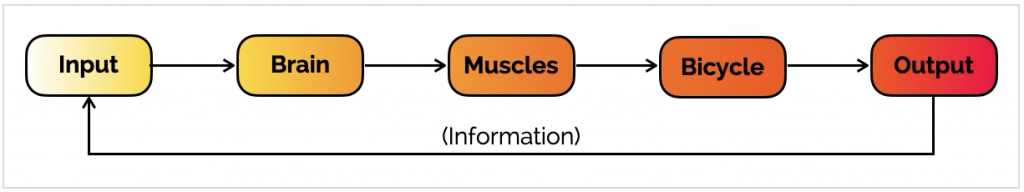

Imagine a bicycle. Together, you can do things that you and the bicycle cannot do separately. Your actions influence what the bicycle does and how the bicycle behaves influences your actions: if it wobbles, you adjust.[5]

By cycling the bicycle, you create stability (a ‘state’) from a situation that would normally be very unstable. If you climb on the bike and do nothing, neither you nor the bike will stay upright. Your brain receives ‘input’ – signals from the environment that you use to decide what you’re going to do – start pedalling, turn, use the brakes, etc. The ‘output’ is the motion of you and the bicycle together. The output, however, creates new information that your brain receives as new ‘input’ – your bike is in a new location, and perhaps you need to turn or stop.

So, information about the system’s output is fed back to the input side. Information that is used this way is called ‘feedback’, and because information about the output feeds back to its input, it is a ‘feedback loop’.

Dampening feedback loops

This feedback provides stability in a system that would otherwise be unstable. This kind of system acts to cancel out or dampen any changes in the system – it is a dampening feedback loop.

Examples of dampening loops:

- A thermostat maintaining temperature (temperature rises → thermostat switches the heating off → temperature drops → thermostat switches the heating on).

- Social conformity pressures (someone deviates → others disapprove → person conforms → approval restored).

- Predator-prey balance (prey increases → predators increase → prey decreases → predators decrease).

Dampening loops create stability and resistance to change. They regulate by constraining variation – keeping systems within bounds.
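The thermostat example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `simulate_thermostat` and the parameters `heat_gain`, `heat_loss` and `band` are hypothetical, and the numbers are arbitrary rather than drawn from any real heating system. The point is simply that feeding the output (temperature) back into the decision (heating on or off) keeps the room within bounds.

```python
def simulate_thermostat(start_temp, target, steps,
                        heat_gain=0.5, heat_loss=0.3, band=0.5):
    """Simulate a room whose heating is switched by a simple thermostat.

    All parameter values are illustrative assumptions.
    """
    temp = start_temp
    heating_on = False
    history = []
    for _ in range(steps):
        # Feedback: the current temperature (output) decides the next action (input).
        if temp < target - band:
            heating_on = True
        elif temp > target + band:
            heating_on = False
        # The room always loses some heat; the heating adds heat only when on.
        temp += (heat_gain if heating_on else 0.0) - heat_loss
        history.append(temp)
    return history


cold_start = simulate_thermostat(start_temp=15.0, target=20.0, steps=200)
hot_start = simulate_thermostat(start_temp=30.0, target=20.0, steps=200)
```

Whether the room starts cold or hot, the later values in both histories hover around the target: the loop cancels out the initial disturbance rather than adding to it.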

Amplifying feedback loops

But there is another kind of feedback loop. Imagine that you are speaking into a microphone at a workshop, and you get too close to the loudspeaker. The sound from the speaker goes into your microphone and produces a loud shriek. It only stops when you walk quickly away. Here, a change ripples through the system, affecting one element’s behaviour, which in turn affects the behaviour of other elements, and so on. This is called an amplifying feedback loop because it amplifies or adds to any disturbance in the system.[6]

The spread of a fire, a viral social media post, an epidemic, chemical and nuclear chain reactions – all of these emerge from amplifying feedback. What they have in common is an explosive quality – a tiny initial spark can quickly cause enormous results.

Examples of amplifying loops:

- Population growth (more rabbits → more babies → more rabbits).

- Erosion (bare soil → runoff increases → more soil removed → more bare soil).

- Elite capture (power attracts resources → resources increase power → more power).

- Social movements (one person protests → others join → movement grows → more join).

Amplifying loops create change and instability. They regulate by reinforcing patterns – driving systems toward particular outcomes. In the CDE model, it is differences that generate feedback loops, one difference serving to dampen or amplify others, and yielding a particular systemic state.

All complex systems have both amplifying and dampening feedback loops. The actual behaviour of the system depends on which loop is ‘stronger’. The balance between these loops determines how the system is regulated – which patterns persist, which fade, and where the system heads.

For example, imagine a population of rabbits. Rabbits breed fast, in an amplifying feedback loop: the more rabbits there are, the more babies they make. But, as the rabbit population increases, the number of rabbits that die each year also increases. This is a dampening feedback loop.[7]

If the birth rate (amplifying) is higher, the population will grow; if the death rate (dampening) is higher, the population will decline. The same basic logic applies to many other systems. For example, the growth of knowledge in a society depends on the rate of discovery (amplifying) being greater than the rate of forgetting (dampening). When events happen that cause mass loss of knowledge, knowledge accumulation is knocked back until the amplifying loop regains strength.
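The rabbit example can be written as a very small simulation. It is a sketch, not a population model: the function name `simulate_population` and the rates used are assumptions chosen only to show how the balance between the amplifying loop (births) and the dampening loop (deaths) sets the direction of change.

```python
def simulate_population(initial, birth_rate, death_rate, years):
    """Track a population driven by one amplifying and one dampening loop."""
    population = float(initial)
    history = [population]
    for _ in range(years):
        births = birth_rate * population   # amplifying: more rabbits, more babies
        deaths = death_rate * population   # dampening: more rabbits, more deaths
        population += births - deaths
        history.append(population)
    return history


growing = simulate_population(100, birth_rate=0.5, death_rate=0.2, years=10)
declining = simulate_population(100, birth_rate=0.2, death_rate=0.5, years=10)
balanced = simulate_population(100, birth_rate=0.3, death_rate=0.3, years=10)
```

When the birth rate is higher the population grows, when the death rate is higher it shrinks, and when the two are equal it holds steady. The same structure could stand in for discovery and forgetting in the knowledge example.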

Interconnections as regulation

‘Regulation theory’ is the study of how transformations in social relations create new economic and non-economic forms, organised in structures that reproduce a particular mode of capitalist development (a ‘mode of reproduction’). Rather than seeing capitalism as governed only by markets or by the state, regulation theory focuses on the broader ensemble of institutions, norms and social compromises that make a specific pattern of accumulation workable for a time.[8]

Regulation theory spotlights the multiplicity of pressures that can influence people’s behaviour – what they choose to do, and what they don’t. These modulations operate through the feedback loops we have just described: dampening loops that constrain certain behaviours (e.g., social sanctions discouraging norm violations) and amplifying loops that reinforce others (e.g., success breeding more success). Regulation, then, is the emergent effect of feedback loops operating across the system – shaping which patterns persist and which fade, which behaviours are encouraged and which suppressed.

In a complex landscape system, consider how resource access is regulated:

Dampening loop: community monitoring detects overfishing → sanctions applied → fishing effort reduced → stocks recover → monitoring continues.

Amplifying loop: elites capture market access → accumulate wealth → buy more land → control more markets → further wealth accumulation.

Both are regulatory – they pattern behaviour over time. Which loop dominates determines system heading. This is what we mean when we say regulation operates through feedback loops. Regulation emerges from the dense web of power relations, norms, incentive structures, and institutional arrangements within which actors operate.

This regulatory patterning has a practical corollary that is easy to state but difficult to internalise: if you want to change what people do, change the context in which they do it. You want processes that generate the behaviour rather than mandating it. In a complex system, practices are not freely chosen; they are produced by the regulatory environment. An intervention that targets behaviour directly (awareness-raising, training, technology transfer) without altering the regulatory context that produces the behaviour will, at best, achieve temporary compliance. The behaviour will snap back once the intervention’s direct pressure is removed, because the context that generated it remains unchanged.

Regulation is how power operates – power patterns behaviour through regulatory feedback loops. We explore this relationship further in our section on power.

Ripples and cascades

‘Opportunity points’ are points in a system where the likelihood of an intervention generating significant systemic change is high – where it can trigger change that the intervention itself could never achieve directly. Two related but distinct dynamics are at work here.

A ripple is a disturbance that travels outward from its point of origin, dissipating as it goes. Energy diminishes with distance. A ripple may be felt, but its effect fades. Many well-intentioned interventions generate ripples: a pilot project inspires a neighbouring community, which inspires another, but each iteration is weaker than the last. Without something to sustain and amplify the signal, the ripple dies out.

A cascade is different. Each step triggers the next with potentially growing effect. A cascade occurs when the initial disturbance activates amplifying feedback loops already present in the system, or disrupts dampening loops that were suppressing change. The disturbance does not dissipate; it propagates and intensifies. In complex systems work, cascades are associated with tipping points and regime shifts: once certain thresholds or ‘enabling conditions’ are met, the change runs through much of the system rather than fading out.

Ripples may be necessary precursors to cascades – local experiments, norm shifts, or prototypes that prepare conditions for later cascades. What a ripple or cascade will yield is impossible to determine, and unintended consequences need to be prepared for.

Whether an intervention generates a ripple that fades or a cascade that transforms depends not on the size of the intervention, but on what it encounters: the system’s regulatory landscape — its feedback architecture and power dynamics — determines whether your small perturbation is absorbed or amplified.
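The difference between a ripple and a cascade can be illustrated with a deliberately crude sketch. It assumes that the system's net feedback can be summarised as a single number, here called `gain` (a hypothetical name): a gain below 1 stands for a context that absorbs a disturbance, and a gain above 1 for a context that amplifies it. Real landscapes are not this simple, so read it as an intuition pump rather than a model.

```python
def propagate(initial_effect, gain, steps):
    """Pass a disturbance through successive steps, scaling it by `gain` each time.

    `gain` is an illustrative stand-in for the net effect of the system's
    feedback loops on the disturbance.
    """
    effects = [initial_effect]
    for _ in range(steps):
        effects.append(effects[-1] * gain)
    return effects


# A large intervention in a dampening context fades (a ripple).
large_ripple = propagate(initial_effect=10.0, gain=0.5, steps=10)

# A small intervention in an amplifying context grows (a cascade).
small_cascade = propagate(initial_effect=0.1, gain=1.5, steps=10)
```

After ten steps the small intervention has overtaken the large one, which is the point of the paragraph above: what the intervention encounters matters more than its size.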

This is why understanding the regulatory structure of the system is a precondition for identifying genuine opportunity points. You need to know where the amplifying loops are. You need to know which dampening loops are holding unwelcome patterns in place. And you need to know who controls those loops – because that is where your opportunity point sits.

Unintended consequences

In complex systems, it is not possible to know what the effects of rippling will be. Each element in the complex system will be influenced and will react in different ways. Such effects are known as unintended consequences. These can have very serious effects.

For example, if we cut a road through a forest to improve trade, it might also make the forest more accessible to loggers. The road triggers amplifying loops (easier access → more logging → more profit → more access), potentially overwhelming dampening loops (forest authority enforcement, community resistance) that might have maintained forest cover. The system is repatterned in ways we didn’t intend.

Understanding which feedback loops dominate – and how interventions might strengthen dampening loops or weaken amplifying ones – is crucial for landscape management. The risk of unintended consequences is very high in complex systems.

Emergence

‘Emergence’ is when interactions between elements generate patterns that did not exist before – patterns that cannot be predicted from the properties of individual elements alone. Emergence is self-organising behaviour. While system elements might be doing things for themselves, without conscious thought for the system as a whole, behaviour nevertheless emerges. “It is not,” writes the physicist Doyne Farmer, “magic…but it feels like magic”.[9] Life, for example, is an emergent property of chemistry and physics. A termite mound emerges through the interactions and activities of millions of individual termites.

Emergence occurs when a system is repatterned – when new patterns of interaction create new system behaviours. When it occurs, it may signal that system heading has changed, or in more dramatic cases, that a systemic tip has occurred into a new state.

System patterns and behaviour

A starling murmuration is a particularly powerful way of thinking about complex system behaviour. An individual starling flying shows none of the characteristic behaviour that its murmuration shows. It is only when we zoom out to look at the murmuration as a whole that we discern this remarkable behaviour.

Each starling is making decisions about what direction to fly in, and whether to swoop or to soar. These decisions, it turns out, are based on the behaviour of each bird’s six to seven neighbours. A bird responds to the distance of its neighbour, cohesion (close but not too close!) and speed.[10] The individual starling’s decisions don’t replicate the exact behaviour of a single neighbour but are based on the behaviour of six to seven. This introduces small adjustments to its flight, the effects of which ripple out through the murmuration. As each bird does this, waves and undulations appear in the overall behaviour of the murmuration.[11]

This example shows how decisions made at individual levels, despite being independent, can still yield systemic patterns of behaviour. These patterns, in aggregate, determine the murmuration’s heading – its direction and speed. Similarly, in landscape systems, individual actor decisions create patterns that determine system heading – the trajectory of the landscape over time. How we, as a society, can change these systemic behaviours has profound implications for how we achieve positive, resilient landscape outcomes.
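The murmuration logic can be sketched as a toy simulation. The sketch makes strong simplifying assumptions: birds move on a flat plane, each bird only aligns its direction with its seven nearest neighbours, and the distance and speed rules mentioned above are left out. The names `polarisation`, `step` and `run_demo` are hypothetical, and this is not the model used in the research cited.

```python
import math
import random


def polarisation(headings):
    """Return about 1.0 when all birds share a heading, near 0.0 when scattered."""
    x = sum(math.cos(h) for h in headings) / len(headings)
    y = sum(math.sin(h) for h in headings) / len(headings)
    return math.hypot(x, y)


def step(positions, headings, neighbours=7, speed=0.05):
    """Each bird turns towards the average direction of its nearest neighbours."""
    new_headings = []
    for px, py in positions:
        # Sort all birds by distance; the bird itself comes first (distance zero).
        order = sorted(
            range(len(positions)),
            key=lambda j: (positions[j][0] - px) ** 2 + (positions[j][1] - py) ** 2,
        )
        group = order[:neighbours + 1]
        x = sum(math.cos(headings[j]) for j in group)
        y = sum(math.sin(headings[j]) for j in group)
        new_headings.append(math.atan2(y, x))
    new_positions = [
        (px + speed * math.cos(h), py + speed * math.sin(h))
        for (px, py), h in zip(positions, new_headings)
    ]
    return new_positions, new_headings


def run_demo(birds=50, steps=30, seed=1):
    """Start from random positions and headings; return polarisation before and after."""
    rng = random.Random(seed)
    positions = [(rng.random(), rng.random()) for _ in range(birds)]
    headings = [rng.uniform(-math.pi, math.pi) for _ in range(birds)]
    before = polarisation(headings)
    for _ in range(steps):
        positions, headings = step(positions, headings)
    return before, polarisation(headings)
```

No bird in this sketch knows the flock's overall direction, and nothing sets it centrally. Calling `run_demo()` returns the flock's polarisation before and after the local adjustments; one would expect the second value to be higher in most runs, though that is a tendency of this kind of rule rather than a guarantee.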

These emergent patterns arise from countless micro-level interactions between actors in the system – no single one of which is individually decisive, but which collectively shift the system’s disposition. In ecological systems, optimisation occurs through precisely these micro-interactions, because there is no single directing force. The same is true in landscape systems: the patterns we observe are not designed but generated through the accumulation of innumerable small exchanges, negotiations, and accommodations between actors. This has direct implications for how we think about intervention: rather than attempting to mandate change through a single decisive act, the practitioner works to shift the conditions under which these micro-interactions occur, trusting that different conditions will generate different patterns. We explore this logic in the sections on foments and opportunity points.

Real-world social examples of patterns are:

- Institutional routines: workdays, school timetables, or bureaucratic procedures. These are regular sequences of roles, decisions, and information flows that repeat daily or yearly, structuring who interacts with whom and how.

- Inequality loops (‘success to the successful’): those with more resources gain better opportunities, which increase their resources further, while others are locked out. This is a classic systems archetype seen in education, careers and markets.

- Cultural norms: stable expectations about behaviour (e.g. gendered division of care work, deference to certain professions) that pattern interaction across many contexts; social scientists often talk about these patterns as institutions, rules, or norms.
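The ‘success to the successful’ loop in the list above can be made concrete with a small Python sketch. It is an illustration under stated assumptions rather than an empirical model: two actors compete for a fixed pool of new opportunities each round, and access to that pool is assumed to grow with the square of an actor's current resources. The function name and all the numbers are hypothetical.

```python
def success_to_the_successful(a=60.0, b=40.0, pool=10.0, rounds=20):
    """Return actor A's share of total resources after each round."""
    shares = [a / (a + b)]
    for _ in range(rounds):
        # Assumption: opportunity is allocated in proportion to the square
        # of current resources, so the richer actor gets more than its
        # proportional share of each new pool.
        weight_a, weight_b = a ** 2, b ** 2
        a += pool * weight_a / (weight_a + weight_b)
        b += pool * weight_b / (weight_a + weight_b)
        shares.append(a / (a + b))
    return shares


if __name__ == "__main__":
    shares = success_to_the_successful()
    print(f"A's share at the start: {shares[0]:.2f}")
    print(f"A's share at the end:   {shares[-1]:.2f}")
```

Both actors gain resources in every round, but the initially better-off actor's share of the total rises each time. If opportunity were instead allocated strictly in proportion to resources, the shares would stay constant. It is the ‘better opportunities’ step that turns a difference into a widening gap.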

Real-world examples of ecological and socio-ecological patterns are:

- Predator–prey cycles: oscillating populations of wolves and deer (more deer → more wolves → fewer deer → fewer wolves → more deer, and so on); a balancing feedback pattern that produces repeating boom–bust behaviour.

- Reinforcing degradation: overgrazing or deforestation that reduces vegetation, increasing erosion and further reducing vegetation – locking a landscape into a degraded state via reinforcing feedback.

- Spatial patterns: patchy vegetation, animal migration routes, or urban sprawl patterns that persist over time due to underlying resource distributions and movement rules.
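The predator–prey cycle in the list above is often illustrated with the Lotka–Volterra equations. The Python sketch below steps those equations forward with simple Euler integration. The parameter values are arbitrary illustrative choices, not estimates for any real deer or wolf population, and the function names are hypothetical.

```python
def predator_prey(deer=10.0, wolves=5.0, dt=0.001, steps=20000):
    """Simulate deer and wolf numbers with the Lotka-Volterra equations."""
    birth, predation = 1.0, 0.1      # deer growth rate; deer lost per wolf
    conversion, death = 0.075, 1.5   # wolves gained per deer; wolf death rate
    history = [(deer, wolves)]
    for _ in range(steps):
        # More deer feed more wolves; more wolves eat more deer.
        d_deer = (birth * deer - predation * deer * wolves) * dt
        d_wolves = (conversion * deer * wolves - death * wolves) * dt
        deer, wolves = deer + d_deer, wolves + d_wolves
        history.append((deer, wolves))
    return history


def count_peaks(values):
    """Count local maxima in a sequence of numbers."""
    return sum(
        1
        for i in range(1, len(values) - 1)
        if values[i - 1] < values[i] >= values[i + 1]
    )


if __name__ == "__main__":
    run = predator_prey()
    deer_numbers = [d for d, _ in run]
    print("deer booms in this run:", count_peaks(deer_numbers))
```

Neither population settles at a fixed level. Each boom in deer sets up the wolf boom that ends it, so the repeating pattern comes from the relationship between the two populations rather than from either one alone.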

Systems and scale

In development work, we often speak of ‘scaling up’. This language suggests a hierarchy: local at the bottom, regional above, national higher still, global at the top. But thinking in hierarchical scales deludes us — it forces us to take our eye off the ball. The problem with hierarchical scale thinking is that it suggests:

- Some actors are inherently ‘above’ others (governments over communities, global over local).

- Power flows vertically (down from higher levels, up through advocacy).

- Influence requires ‘jumping scales’ (reaching higher levels to have impact).

- Local work is less important than national or global work.

But complex landscape systems do not work this way. As we have discussed, systems are constituted through relations of different geographic extent operating simultaneously. A farmer’s decision is shaped by soil moisture (immediate), market prices (regional to global), cultural norms (historical-local), and climate patterns (planetary) – none of these is ‘above’ or ‘below’ the others. They are all co-present, all exerting influence through feedback loops.

Everything is horizontal. The hierarchical ladder is a conceptual instrument that power accumulators use to establish their primacy. When a government claims to be ‘above’ communities, it is performing hierarchy – enacting it through practices (issuing commands, building towers, sitting on elevated platforms) that make the hierarchy seem natural. But the actual relations are horizontal: the government depends on communities for legitimacy, tax revenue, compliance; communities depend on government for services, recognition, protection.

What we call ‘vertical power’ is really accumulated horizontal power – a coordinator who has accreted many actors’ micro-powers through coercion, coordination, or capture. The coordinator is not literally ‘above’ the landscape; they’re densely connected to many actors and positioned to interrupt or redirect flows. This gives them leverage, but the relations remain horizontal.

If we abandon hierarchical scale thinking, how should we think about extending influence? Instead of ‘scaling up/out/deep,’ we should talk about:

- Extending relations: creating connections with more distant elements in the system (not moving ‘up’ but reaching ‘out’ to elements at different geographic extents).

- Replicating systems: supporting the emergence of similar systems elsewhere, learning from your experience but adapted to their specific relations (not spreading ‘horizontally’ at the same level but helping new systems form).

- Stabilising systems: strengthening internal relations, coding practices into norms, building trust and shared identity (not ‘deepening’ within a level but making the system more robust).

- Rippling: triggering cascades through existing relations, creating feedback loops that amplify desired patterns (not ‘scaling’ but letting influence flow through the network).

The whole intent behind an intervention is to trigger this cascade in positive ways. As we argue in the power section, power is a key opportunity point for initiating this. When we shift how power is distributed in a system – from concentrated to shared, from accumulation to circulation – the effects ripple.

Our systems thinking

Complex systems display patterns of behaviour that, in aggregate, determine system heading – the direction and trajectory in which the system is moving. Logically, when we are designing an intervention, we judge the system’s current heading to be a problem – otherwise, there’s no point to the intervention.

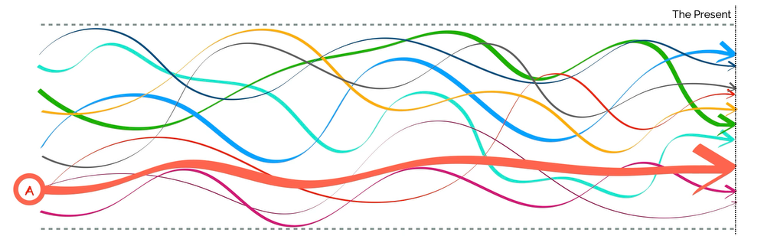

In the first graphic, we have a system. Its edges are characterised by the parallel dotted lines (remember, edges are fuzzy zones where relationships strengthen or weaken, not hard boundaries). The fluctuating lines between these edges are the trajectories of actors. Whenever one actor’s trajectory intersects with another’s, this is an interaction. Let us say these are human actors. Combined – through their interactions – a pattern of behaviour has emerged.

Actor A dominates this patterning, and therefore strongly influences overall system behaviour and heading. Perhaps Actor A is an influential politician, or a large, open-cast mining company. The size of Actor A’s influence in the system means that it plays a disproportionate role in defining the system’s heading at that moment (note that the present is to the right of the graphic; everything to the left is history). There are many other actors in the system, and they each (and their interactions) influence system behaviour – but to a much lesser degree than Actor A.

In CDE terms:

- Container: the system boundary (dotted lines).

- Difference: Actor A has significantly more power than other actors – a dominant difference.

- Exchange: currently weak and fragmented among non-A actors; Actor A controls key exchanges.

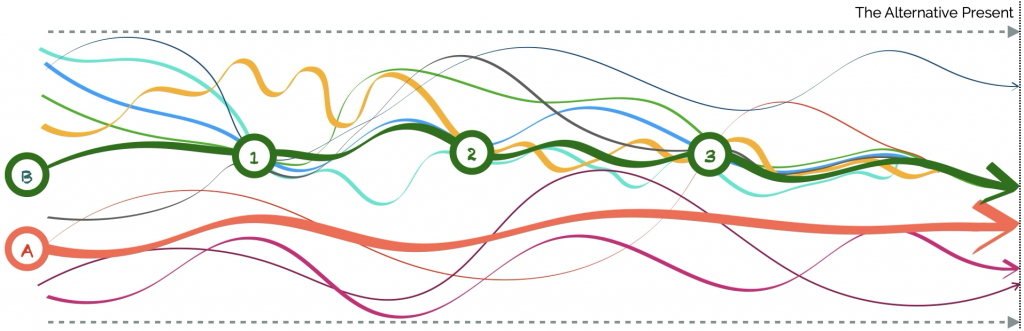

But then we introduce a new actor to the system. Actor B is an intervention. It seeks an alternative present in which Actor A is less influential in the system. In the second graphic, Actor B implements a series of strategies to enable greater levels of collaboration amongst other system actors. At Juncture 1, maybe this is a multi-stakeholder workshop. It pulls many actors into this moment. By the time it reaches Juncture 2, some actors have strayed, but many show up again. At Juncture 3, most of the actors are on board – and they are increasingly working together, influencing system dynamics.

What Actor B has done in CDE terms:

- Strengthened the container: created commitment and shared identity among dispersed actors.

- Engaged difference productively: facilitated dialogue across power asymmetries without suppressing them.

- Tightened exchanges: built dense connections between previously disconnected actors, creating mutual reinforcement.

Combined, all of these actors now out-influence Actor A in terms of determining system behaviour – a change in system heading has occurred. The system’s trajectory has moved – it is now moving in a direction where Actor A’s dominance is reduced and collective action among other actors shapes outcomes. The system has been repatterned.

Repatterning the system in this way does not result in a full shift to the desired alternative present. Compromises and trade-offs, after all, must necessarily happen. But it reduces Actor A’s systemic footprint, which then yields improvement to overall system condition.
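The shift in heading described above can be illustrated with a toy calculation in Python. Everything in it is an assumption made for illustration. System heading is treated as an influence-weighted average of actors' preferred directions (-1 for Actor A's direction, +1 for the desired alternative present). Each actor's effective influence is its power multiplied by an `exchange` score between 0 and 1 that stands in for how well connected it is. The power values and the exchange scores assigned to each juncture are invented.

```python
def system_heading(actors):
    """Influence-weighted average of the actors' preferred headings.

    Each actor is a tuple (power, exchange, preferred_heading).
    Effective influence is assumed to be power * exchange.
    """
    pull = sum(power * exchange * heading for power, exchange, heading in actors)
    weight = sum(power * exchange for power, exchange, _ in actors)
    return pull / weight


# Hypothetical dominant actor: high power, fully connected, heading -1.
ACTOR_A = (10.0, 1.0, -1.0)


def landscape(exchange, others=20):
    """Actor A plus `others` small actors who all prefer heading +1."""
    return [ACTOR_A] + [(1.0, exchange, 1.0)] * others


# Before the intervention: exchanges among the other actors are weak.
before = system_heading(landscape(0.2))

# Assumed exchange strength at Junctures 1, 2 and 3: many actors join,
# some stray, then most are on board.
junctures = [system_heading(landscape(e)) for e in (0.5, 0.4, 0.9)]

if __name__ == "__main__":
    print(f"heading before intervention: {before:+.2f}")
    for number, heading in enumerate(junctures, start=1):
        print(f"heading at Juncture {number}: {heading:+.2f}")
```

In this sketch Actor A's power never changes. The heading changes sign only because the other actors' exchanges tighten, and it still stops well short of +1, which mirrors the point that repatterning involves compromise rather than a complete shift to the alternative present.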

Actor B clearly has formidable convening capabilities to foster or enable interaction amongst relatively disempowered actors in the system; this also implies that Actor B brings the skills needed to detect promising opportunity points.

References and further reading

Beer, S. 2002. What is cybernetics? Kybernetes 31(2): 209-219, https://doi.org/10.1108/03684920210417283

Boyer, R. 2002. Introduction. In Boyer, R. and Saillard, Y. (eds), Régulation theory: the state of the art. Translated by Carolyn Shread, pp. 1-10. London: Routledge.

Burkett, I., McNeill, J. et al. 2023. Seeding futures for wellbeing: catalysing collective imagination. Brisbane: Griffith Centre for Systems Innovation. https://research-repository.griffith.edu.au/handle/10072/425156.

Capra, F. and Luisi, P.L. 2014. The systems view of life: a unifying vision. Cambridge: Cambridge University Press.

Cavagna, A. and Giardina, I. 2008. The seventh starling. Significance 5(2): 62-66, https://doi.org/10.1111/j.1740-9713.2008.00288.x.

Eoyang, G.H. 2001. Conditions for self-organizing in human systems. PhD Thesis. Cincinnati (OH): The Union Institute and University, https://s3.amazonaws.com/hsd.herokuapp.com/contents/15/original/gheoyang_dissertation.pdf?1332189639.

Eoyang, G.H. and Holladay, R.J. 2013. Adaptive action: leveraging uncertainty in your organization. Stanford (CA): Stanford University Press.

Holland, J.H. 2014. Complexity: a very short introduction. Oxford: Oxford University Press.

Kauffman, D.L. Jr. 1980. Systems one: an introduction to systems thinking. 2nd Ed. Minneapolis (MN): Future Systems Inc.

Ludwig, D. and Walter, C. 1993. Uncertainty, resource exploitation, and conservation: lessons from history. Science 260 (5104): 17-36, https://doi.org/10.1126/science.260.5104.17.

Marghetis, T. 2022. Can you tell when a system is about to tip? Podcast: Simplifying Complexity, October 31, 2022, https://omny.fm/shows/simplifying-complexity/tyler-marghetis-topic-1.

Massey, D. 2005. For space. London: Sage.

Meadows, D. 2009. Thinking in systems: a primer. London: Earthscan.

Reynolds, C.W. 1987. Flocks, herds, and schools: a distributed behaviour model. Computer Graphics 21(4): 25-34, https://doi.org/10.1145/37402.37406.

Snowden, D., Greenberg, R. and Boudewijn, B. 2021. Cynefin: weaving sense-making into the fabric of our world. Colwyn Bay: Cognitive Edge – The Cynefin Co.

Theise, N. 2023. Notes on complexity: a scientific theory of connection, consciousness, and being. New York (NY): Spiegel & Grau.

Tsing, A.L. 2005. Friction: an ethnography of global connection. Princeton (NJ): Princeton University Press.

Ricigliano, R. 2021. The complexity spectrum: systems change is not for everyone, but making a good choice is. Medium September 27, 2021, https://blog.kumu.io/the-complexity-spectrum-e12efae133b0.

Von Bertalanffy, L. 1969. General system theory: foundations, development, applications. New York (NY): George Braziller.

Waldrop, M.M. 1992. Complexity: the emerging science at the edge of order and chaos. New York (NY): Touchstone Simon & Schuster.

Westley, F., Patton, M.Q. and Zimmerman, B. 2007. Getting to maybe: how the world is changed. Toronto: Vintage Canada.

Unintended: examples of trying to use simple solutions (and interpretations) to solve complex problems

Parachuting cats

In the early 1950s, the Dayak people in Borneo suffered from malaria. The World Health Organization (WHO) had a solution: they sprayed large amounts of DDT to kill the mosquitoes that carried the malaria. DDT is a powerful and long-lasting insecticide that is also poisonous to other animals. The mosquitoes died, the malaria declined; so far, so good. But the impact of the DDT rippled.

First, the roofs of people’s houses began to fall down on their heads. It seemed that the DDT was killing a parasitic wasp that had previously controlled thatch-eating caterpillars. Worse, the DDT-poisoned insects were eaten by geckoes, which were eaten by cats. The cats died, the rats flourished, and people were threatened by outbreaks of sylvatic plague and typhus.

To cope with these problems, which it had itself created, the World Health Organization was obliged to parachute 14,000 live cats into Borneo.

From: Lovins, L. Hunter and Lovins, Amory B. 1995. How not to parachute more cats. The Rocky Mountain Institute. https://rmi.org/wp-content/uploads/2017/05/RMI_Document_Repository_Public-Reprts_G96-01_ParaCats.pdf

Fish for pastoralists

In 1967 Kenya made a request for Norwegian assistance to develop the fisheries of Lake Turkana. A project was formulated and agreed and its first phase started in 1970. The Norwegian Agency for Development Cooperation (NORAD) provided financial and technical assistance, initially focusing on building the Turkana Fishermen’s Cooperative Society, providing fishing boats, gear, and advisory personnel.

A Norwegian delegation visited the area in March 1970 to assess the prospects for a Norwegian-assisted fishery project. In their report they concluded that: “The only alternative to nomadic pastoralism is the fishery in Lake Turkana…and this again is the only possibility for the Turkana to achieve settlement, sufficient nourishment and access to money. Settlement is a condition for schooling, health services and other social welfare” (quoted in Kolding, 1989).

Given the remoteness of the lake from potentially lucrative markets, it was further decided that a fish freezing plant was needed. In 1975 the Government of Kenya submitted a request to Norway for assistance in building such a plant. In 1976 and 1977, NORAD carried out feasibility studies of a fish processing plant, including evaluations of marketing conditions and the economics of frozen fish production.

The plant cost US$2 million (Harden, 1986). A further $20 million was spent on a road that would connect the plant to Kisumu in western Kenya.

The freezing plant would need to reduce fish temperatures from 38 °C (the average daytime temperature around Lake Turkana) to zero, which in turn required immense quantities of power – more than was available in Turkana District. For a while, it was able to run on generators, but whatever the case, this made for extremely expensive fish. The plant operated for only a few days before the electricity was turned off, converting it “into Africa’s most handsome dried-fish warehouse” (Harden, 1986).

Then there were regular cycles of drought, which occurred every 30 years or so. When the plant was constructed, close to the main fishing areas in Lake Turkana’s Ferguson’s Gulf, the lake was high. Shortly after the fish plant was completed, virtually the whole of the gulf disappeared as a result of drought, and the plant was left kilometres from the lake shore. The research vessel Iji, also provided by the Norwegians, was left stranded and abandoned in the mud of what had been Ferguson’s Gulf (Harden, 1986).

The Turkana are not, traditionally, a fishing people. “If you fish, it means you are poor because you have no livestock …. Mostly, it is people who have lost everything to drought who go fishing, when there’s no other choice.” (Nandapal resident, quoted in Cocks, 2006). Many of the 20,000 Turkana whom the Norwegians had brought to the lake shore, given boats and nets and taught how to fish, were soon stuck without a fishery. Destitute and without their livestock – which had died due to overcrowding and disease on the lake shores – many Turkana turned to food aid (Harden, 1986).

From: Harden, B. 1986. How not to aid African nomads: foreign-funded high-tech programs increase a Kenyan tribe’s problems. The Washington Post April 4, 1986, https://www.washingtonpost.com/archive/politics/1986/04/05/how-not-to-aid-african-nomads/df2edbff-7294-46dd-abf1-fc3dc13b36ff/

Kolding, J. 1989. Appendix A: The history of the Lake Turkana fisheries and the role of TFCS and NORAD. In: The fish resources of Lake Turkana and their environment. M.Phil Thesis, University of Bergen, https://www.academia.edu/9517045/The_history_of_Lake_Turkana_fisheries_and_the_role_of_TFCS_and_Norad.

Smashing Sparrows

China’s Great Leap Forward had resulted in the imposition of huge agricultural reforms, which had, in turn, resulted in significant declines in food production. The Chinese Communist Party chose to blame pests – flies, mosquitoes, rodents and sparrows – for the declines. Each sparrow, they computed, ate 2 kg of grain per year.

The campaign was launched in 1958. As one former school pupil remembered, “the whole school went to kill sparrows. We made ladders to knock down their nests, and beat gongs in the evenings, when they were coming home to roost.” By 1958, an estimated 2 billion sparrows had been killed. In Beijing, the Polish Embassy refused to participate in the targeting of the species and became a refuge for any remaining sparrows. The Polish diplomats declined to let the public into the embassy to kill the birds. Instead, people surrounded the embassy and banged pots and pans for two days, until the sparrows inside the embassy dropped dead from exhaustion. Polish personnel recounted how they had to use shovels to clear out thousands of dead birds.

In April 1960, an ornithologist pointed out that sparrows ate a large number of insects besides grains. The ecological balance had been upset, and bed bugs flourished along with other insect populations. With no sparrows to eat them, locust populations ballooned, swarming the country and compounding the ecological problems already caused by the Great Leap Forward, including widespread deforestation and the misuse of poisons and pesticides. Ecological imbalance is credited with exacerbating the Great Chinese Famine during which 15-55 million people died.

From: Wikipedia, 2025, The four pests campaign, https://en.wikipedia.org/wiki/Four_Pests_campaign

[1] Meadows, 2009.

[2] Beer, 2002.

[3] Eoyang, 2001.

[4] Marghetis, 2022.

[5] Kauffman, 1980.

[6] Kauffman, 1980.

[7] Kauffman, 1980.

[8] Boyer, 2002.

[9] Quoted in Waldrop, 1992.

[10] Reynolds, 1987.

[11] Cavagna and Giardina, 2008.
